The biggest youth platform in the world is joining the Platform Power Coalition for a Digital Services Act that empowers young people. The European Youth Forum will bring youth voices to the coalition, affirming that digital rights are youth rights. Young people should be able to enjoy their digital environment without fearing privacy violations, discrimination or manipulation. Here is what you need to know about this alliance.

Platform Power Campaign

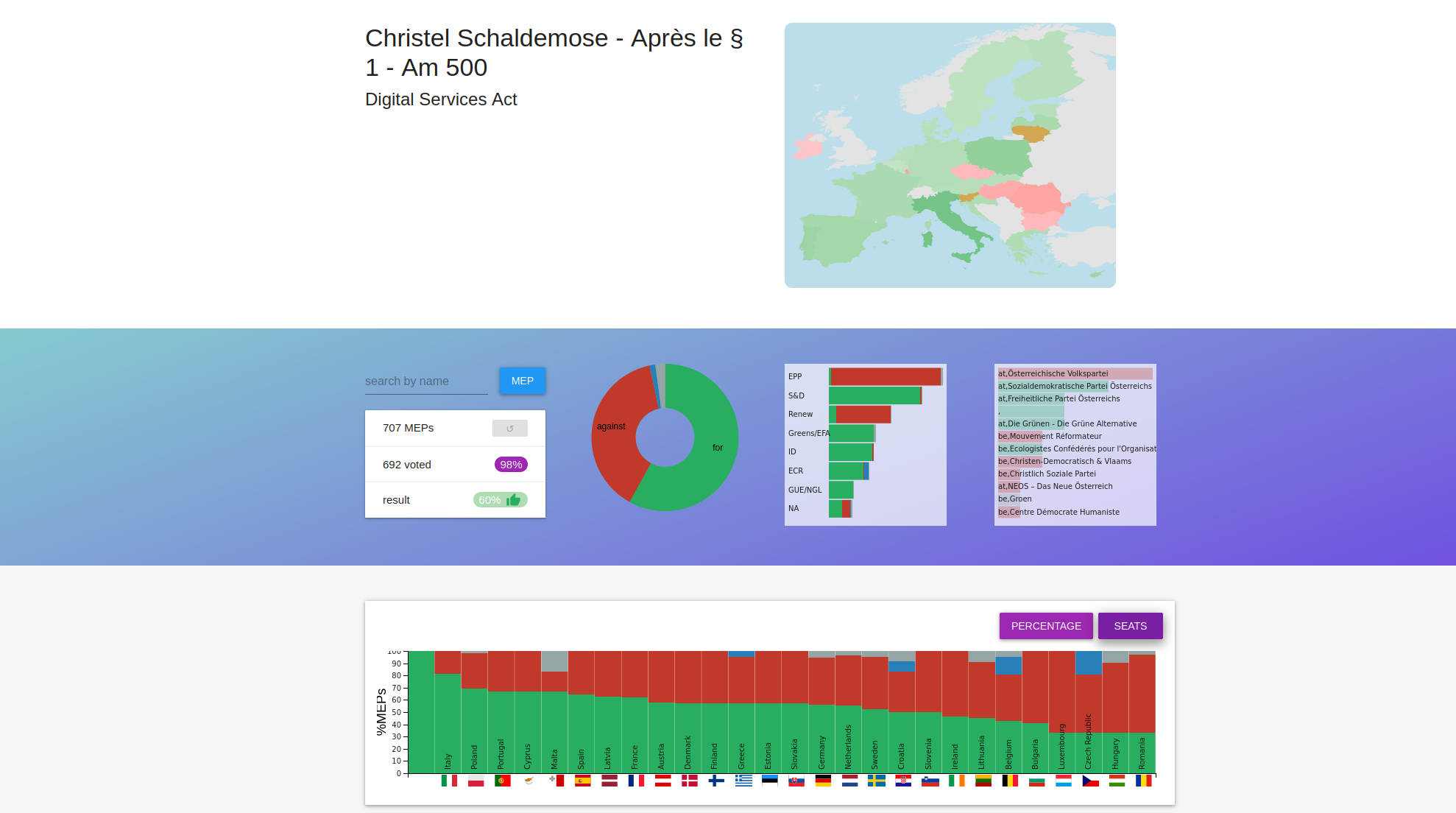

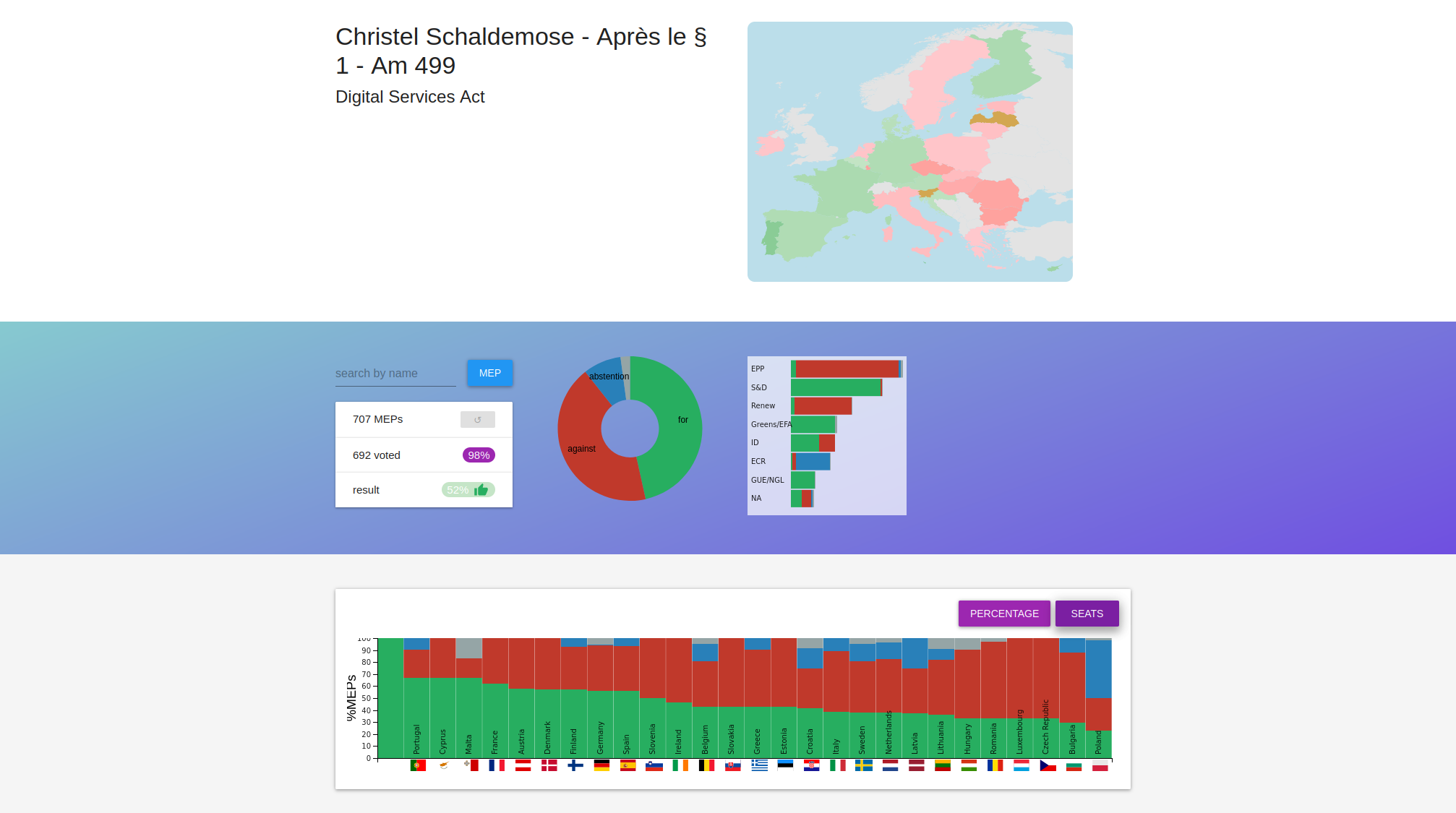

The Platform Power campaign focuses on three main issues around the Digital Services Act. The Digital Services Act (DSA) is a piece of EU legislation that consolidates and updates the rules on illegal or harmful online content and on the provision of digital services across the EU, which were previously spread across various separate laws. In April 2022 a political deal was reached on the DSA, and on 4 July it will be sealed by the European Parliament.

We are a coalition of 12 organisations (with more to follow!) coordinating actions to get the Digital Services Act done right! The European Youth Forum (YFJ) is one of them. YFJ works on youth rights to make sure that young people are empowered and encouraged to achieve their fullest potential as global citizens.

YFJ is the biggest youth platform in the world, representing over 10 million young Europeans, so bringing it into the Platform Power coalition means adding youth voices to some of the most important discussions of our time.

Why is the DSA important for young people?

Today’s young people are the first generation whose entire lives are encoded in digital data. As the biggest group of users on social media platforms, young people are most affected by changes to the way the online world is managed.

In fact, 75% of young people reported wanting to know how their data is used when they use their social media accounts to log in to other websites, and 90% of young people in the EU would find it useful to know their digital rights. However, young people's rights often go under the radar. Strict rules that protect children suddenly fall away once people turn 18, exposing young people to a deliberately confusing landscape of data extraction, unwanted content and cyberbullying.

Contrary to the common belief that they are "digital natives", young people are not always aware of the harms they face online. While most are at ease using social media apps and entertainment platforms, this does not necessarily translate into an innate knowledge of how to browse safely, how algorithms work or how to protect themselves online.

For example, 60% of young people surveyed do not believe that social media companies know their ethnicity, sexual orientation, religion or political beliefs. Yet platforms are constantly inferring this kind of personal information about young people through data profiling, which creates a risk of discrimination.

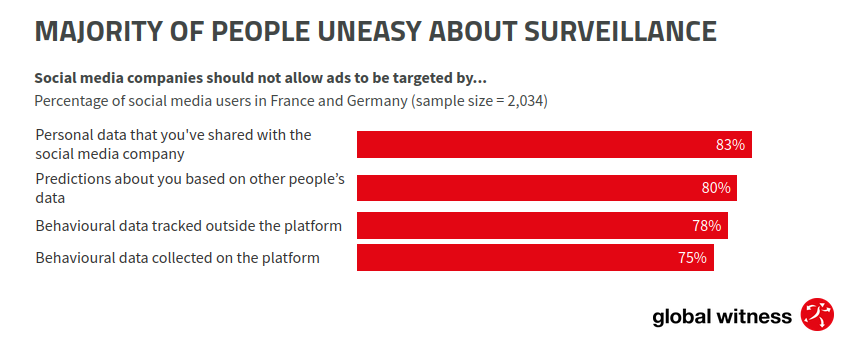

Young people are not in favour of targeted advertising. Such ads are invasive, manipulative and can be triggering, because they are based on our personal data. Yet we know that Meta's algorithms target young people with personalised ads that exploit users' mental vulnerabilities, such as trauma.

While we are all affected by this, not all young people experience the digital space in the same way. LGBTQ+ people, young activists, young women and people of colour are often more vulnerable to these harms. Big Tech companies use algorithms and surveillance ads designed to keep us on their platforms for longer, and these systems tend to amplify polarisation and misinformation. All of this (surveillance ads, algorithms that shape what we do or do not see on the internet, and deceptive design practices) limits young people's ability to organise around the causes we care about, such as climate change, social justice, fair pay and employment, and democratic engagement.

Big Tech companies should not have a free playground to decide young people’s lives and future.

Young people should have the power to choose what they want to see online, and should not be drawn in by manipulative design practices that lull us into doing or buying things we didn't want. When young people don't have this power, their life choices narrow and their opportunities diminish.

What needs to be done?

Young people should have the power and the right to choose from a world of opportunities and discovery on the internet and in their digital lives, rather than being subjected to data extraction, manipulation and illegal content.

The DSA is a good first step. Now, the implementation of this law and any follow-up recommendations need to stay true to the intentions behind it.

We now need:

- a real end to surveillance ads for all

- rights-respecting content governance, in all languages used on the platform

- limits to algorithmic recommendations pushing young people to act in ways that do not reflect their true wants or needs

- proper enforcement of laws

- enough political will from our decision-makers

- Big Tech taking the law seriously

- people looking for alternatives to Big Tech platforms.

We are excited to work together and make sure that the implementation of the Digital Services Act empowers young people!

Join us in this movement, and help make digital spaces places where you can flourish and thrive.

(Contribution by: Maria Belén Luna Sanz, Campaigns Officer, EDRi and Lauren Mason, Policy and Advocacy Manager, European Youth Forum)